The modern corporate landscape has undergone a seismic shift, moving from static on-premises data centers to the dynamic, scalable world of cloud computing. Initially positioned as a cure-all for infrastructure limitations, the cloud promised near-infinite uptime and geographic redundancy. Yet recent high-profile outages across major service providers have exposed a more complex reality.

When a centralized cloud node fails, the ripple effects can disrupt thousands of businesses simultaneously—triggering revenue loss, operational paralysis, and erosion of customer trust. While infrastructure management may sit with the provider, accountability for operational resilience remains firmly with the enterprise.

Forward-thinking organizations, and we at STL Digital, recognize that the transition from legacy infrastructure to the cloud is not merely a technical migration but a fundamental shift in how business value is delivered and protected.

The Resilience Gap in Modern Cloud Architectures

Cloud-native architectures built on microservices, containerization, and serverless computing have unlocked remarkable agility. However, they have also introduced unprecedented complexity.

In monolithic systems, failure points were easier to isolate. In modern distributed ecosystems, a single customer transaction may traverse dozens of independent services—each with distinct latency patterns and failure modes. This interdependency creates a “blast radius” effect where minor faults can escalate into widespread system outages.
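A back-of-the-envelope sketch makes this compounding effect concrete. It assumes, purely for illustration, that a transaction needs every service in a chain and that services fail independently; the 99.9% figure and the count of 30 services are hypothetical, not measurements.

```python
def chain_availability(per_service_availability: float, service_count: int) -> float:
    """Availability of a transaction that needs every service in the chain.

    Assumes independent failures and strictly serial dependencies, which is
    a simplification of how real systems behave.
    """
    if not 0.0 <= per_service_availability <= 1.0:
        raise ValueError("availability must be between 0 and 1")
    if service_count < 0:
        raise ValueError("service_count must not be negative")
    return per_service_availability ** service_count


# Hypothetical numbers: 30 services, each available 99.9% of the time.
end_to_end = chain_availability(0.999, 30)
print(f"End-to-end availability: {end_to_end:.4f}")
```

Under these assumptions, thirty services that each look healthy on their own leave the end-to-end transaction available only about 97% of the time.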

Business continuity in this environment demands a new definition of success. A system is no longer successful simply because it is “up.” It needs to be performant and secure, and it must degrade gracefully under stress.

The resilience gap is the difference between what an architecture is supposed to provide under normal conditions and what it can actually provide during a crisis. Closing this gap is no longer a matter of choice but a strategic necessity in a digital-first economy.
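Graceful degradation can be as simple as serving a reduced experience when a dependency fails. The following is a minimal sketch, not a prescribed implementation; `with_fallback`, `personalized_recommendations`, and `bestseller_list` are hypothetical names invented for illustration.

```python
from typing import Callable, List, Tuple, TypeVar

T = TypeVar("T")


def with_fallback(primary: Callable[[], T], fallback: Callable[[], T]) -> Tuple[T, bool]:
    """Run primary; if it raises, return the fallback result instead.

    Returns (result, degraded) so callers can tell when they are serving
    a reduced experience.
    """
    try:
        return primary(), False
    except Exception:
        return fallback(), True


# Hypothetical services, for illustration only.
def personalized_recommendations() -> List[str]:
    raise TimeoutError("recommendation service did not respond")


def bestseller_list() -> List[str]:
    return ["item-a", "item-b", "item-c"]


items, degraded = with_fallback(personalized_recommendations, bestseller_list)
print(items, "degraded" if degraded else "full")
```

The page still renders with a generic list rather than failing outright, and the `degraded` flag lets the caller record how often the reduced path is taken.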

The Economic Cost of Downtime

The urgency behind resilience is rooted in financial reality. The cost of downtime is no longer counted in thousands, but in millions.

According to Gartner, worldwide public cloud end-user spending is projected to approach $723.4 billion in 2025, and 90% of organizations will adopt hybrid cloud through 2027. The scale of the industry is breathtaking, and recent projections suggest it will only accelerate. According to Statista, revenue in the public cloud market worldwide is projected to reach US$1.19tn in 2026.

Similarly, International Data Corporation (IDC) forecasts that worldwide spending on public cloud services will double between 2024 and 2028. Yet this booming growth tends to overshadow an important truth: for high-priority applications, the cost of an outage can exceed $100,000 per hour, not counting long-term brand devaluation or legal fines.

With digital channels now the main profit engines, building a more resilient foundation through robust Cloud Services directly protects revenue. Resilience is no longer just an IT metric; it is a board-level metric.

From Quality Assurance to Quality Engineering

Traditional Quality Assurance (QA) was largely reactive—a final checkpoint before release. In a world defined by CI/CD pipelines and rapid deployment cycles, that model is obsolete.

Quality Engineering (QE) represents a forward-looking evolution. Instead of testing for quality at the end, QE builds quality into every stage of the software lifecycle. It integrates automated testing, performance checks, security checks, and resilience checks into development pipelines.

An effective QE framework rests on a single principle: prevention is better than detection. Rather than counting bugs after release, organizations measure availability, system performance, and Mean Time to Recovery (MTTR).

Quality Engineering is the lifeblood of an organization. Integrated into a Digital Transformation Strategy, it becomes the heartbeat that ensures innovation does not undermine stability.
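As a rough illustration of how these measures can be derived, the sketch below computes MTTR and availability from a list of incident records. The `Incident` type and the sample timestamps are hypothetical, and the calculation assumes incidents do not overlap.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import List


@dataclass
class Incident:
    started: datetime
    resolved: datetime

    @property
    def duration(self) -> timedelta:
        return self.resolved - self.started


def mean_time_to_recovery(incidents: List[Incident]) -> timedelta:
    """Average time from the start of an incident to its resolution."""
    if not incidents:
        return timedelta(0)
    total = sum((i.duration for i in incidents), timedelta(0))
    return total / len(incidents)


def availability(incidents: List[Incident], window: timedelta) -> float:
    """Fraction of the window without an incident (assumes no overlaps)."""
    downtime = sum((i.duration for i in incidents), timedelta(0))
    return 1.0 - downtime / window


# Hypothetical incidents within a 30-day reporting window.
incidents = [
    Incident(datetime(2025, 1, 3, 9, 0), datetime(2025, 1, 3, 9, 30)),
    Incident(datetime(2025, 1, 17, 14, 0), datetime(2025, 1, 17, 15, 30)),
]
mttr = mean_time_to_recovery(incidents)
uptime = availability(incidents, timedelta(days=30))
print(f"MTTR: {mttr}, availability: {uptime:.4%}")
```

Tracking these two numbers per release makes it visible whether pipeline changes are shortening recovery or merely shifting failures around.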

Technical Pillars of Cloud Resilience

Resilient cloud environments are not achieved through one-time upgrades but through continuous engineering discipline. Several foundational pillars define this approach:

1. Chaos Engineering: Designing for Failure

Controlled disruption is one of the best ways to validate resilience. Chaos Engineering applies stress to a system by deliberately introducing faults, for example by terminating services or injecting latency.

This reveals latent dependencies and validates failover mechanisms before real incidents occur. The practice is essential for any high-maturity IT Solutions and Services program, because the aim is no longer to avoid all failures but to survive them.

Companies that embrace this mindset build systems that withstand real-world volatility instead of crumbling under it.
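The idea can be sketched in a few lines. The example below is a toy experiment under stated assumptions, not a production chaos tool: `FaultInjector`, `fetch_with_failover`, and the 30% failure rate are all hypothetical. It injects faults into a primary service and compares success rates with and without a healthy secondary replica.

```python
import random
from typing import Callable, List


class FaultInjector:
    """Wraps a call and makes it fail with a configured probability."""

    def __init__(self, failure_rate: float, seed: int = 0) -> None:
        self.failure_rate = failure_rate
        self._rng = random.Random(seed)  # seeded so experiments are repeatable

    def call(self, func: Callable[[], str]) -> str:
        if self._rng.random() < self.failure_rate:
            raise ConnectionError("injected fault")
        return func()


def fetch_with_failover(replicas: List[Callable[[], str]]) -> str:
    """Try each replica in order and return the first successful response."""
    last_error = None
    for replica in replicas:
        try:
            return replica()
        except ConnectionError as error:
            last_error = error
    raise RuntimeError("all replicas failed") from last_error


def success_rate(replicas: List[Callable[[], str]], requests: int = 1000) -> float:
    """Fraction of requests that succeed against the given replicas."""
    succeeded = 0
    for _ in range(requests):
        try:
            fetch_with_failover(replicas)
            succeeded += 1
        except RuntimeError:
            pass
    return succeeded / requests


chaos = FaultInjector(failure_rate=0.3, seed=42)  # hypothetical fault rate


def primary() -> str:
    return chaos.call(lambda: "primary")


def secondary() -> str:
    return "secondary"  # assumed healthy for this experiment


print("primary only:", success_rate([primary]))
print("with failover:", success_rate([primary, secondary]))
```

If the second figure were anything less than 1.0, the experiment would have exposed a failover path that does not hold up, which is exactly the kind of finding such a test is meant to surface before a real incident does.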

2. Observability and Predictive Insight

Monitoring tells you that something has gone wrong. Observability explains why.

By correlating logs, metrics, and distributed traces, engineering teams gain substantial insight into system behavior. Combined with predictive analytics, observability enables an enterprise to anticipate failures before they affect customers.

This paradigm shift, from reactive firefighting to proactive prevention, is the new standard of operational excellence.
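A minimal sketch of such correlation, assuming logs and trace spans share a `trace_id` field: the records, field names, and the `self_time_ms` attribute (time spent in a service excluding its downstream calls) are hypothetical and do not reflect any particular vendor's schema.

```python
from collections import defaultdict
from typing import Dict, List

# Hypothetical telemetry records sharing a trace_id.
logs = [
    {"trace_id": "t1", "service": "checkout", "level": "INFO", "message": "order received"},
    {"trace_id": "t2", "service": "payment", "level": "ERROR", "message": "card gateway timeout"},
    {"trace_id": "t2", "service": "checkout", "level": "ERROR", "message": "payment failed"},
]
spans = [
    {"trace_id": "t1", "service": "checkout", "self_time_ms": 120},
    {"trace_id": "t2", "service": "checkout", "self_time_ms": 100},
    {"trace_id": "t2", "service": "payment", "self_time_ms": 5000},
]


def correlate(logs: List[dict], spans: List[dict]) -> Dict[str, dict]:
    """Group error logs and spans by the trace they belong to."""
    by_trace: Dict[str, dict] = defaultdict(lambda: {"errors": [], "spans": []})
    for record in logs:
        if record["level"] == "ERROR":
            by_trace[record["trace_id"]]["errors"].append(record)
    for span in spans:
        by_trace[span["trace_id"]]["spans"].append(span)
    return dict(by_trace)


def slowest_service_in_failed_traces(logs: List[dict], spans: List[dict]) -> Dict[str, str]:
    """For each trace with errors, name the service with the largest self time."""
    suspects = {}
    for trace_id, data in correlate(logs, spans).items():
        if data["errors"] and data["spans"]:
            slowest = max(data["spans"], key=lambda span: span["self_time_ms"])
            suspects[trace_id] = slowest["service"]
    return suspects


print(slowest_service_in_failed_traces(logs, spans))
```

Here the error logs alone show that checkout failed, while the joined trace data points to the payment service as the place to start investigating.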

3. Automated Performance and Load Testing

In the cloud, performance is part of availability. A system that responds slowly or fails to meet expected response times is, from the user's perspective, effectively unavailable.

Automated load and stress testing ensures that Cloud Services remain responsive during peak demand. In industries with predictable but heavy traffic surges, such as retail and finance, this discipline is critical to safeguarding brand credibility.
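A load test boils down to sending concurrent requests and examining the latency distribution. The sketch below uses only the Python standard library and a simulated handler; `fake_checkout`, the request count, and the concurrency level are hypothetical stand-ins for a real endpoint and a real traffic profile.

```python
import math
import time
from concurrent.futures import ThreadPoolExecutor
from typing import Callable, List


def timed_call(handler: Callable[[], None]) -> float:
    """Return how long a single call to handler takes, in seconds."""
    start = time.perf_counter()
    handler()
    return time.perf_counter() - start


def run_load_test(handler: Callable[[], None], total_requests: int, concurrency: int) -> List[float]:
    """Issue total_requests calls using a pool of worker threads."""
    with ThreadPoolExecutor(max_workers=concurrency) as pool:
        return list(pool.map(lambda _: timed_call(handler), range(total_requests)))


def percentile(values: List[float], pct: float) -> float:
    """Nearest-rank percentile; pct is in the range (0, 100]."""
    ordered = sorted(values)
    rank = max(1, math.ceil(pct * len(ordered) / 100))
    return ordered[rank - 1]


def fake_checkout() -> None:
    time.sleep(0.002)  # simulated work standing in for a real request


latencies = run_load_test(fake_checkout, total_requests=200, concurrency=20)
p95 = percentile(latencies, 95)
print(f"p95 latency: {p95 * 1000:.1f} ms")
```

Reporting a high percentile rather than the average matters because peak-demand problems usually show up in the slowest requests first.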

The Cultural Shift: Site Reliability Engineering

Technology alone cannot ensure resilience. Culture is equally critical.

Site Reliability Engineering (SRE) bridges operations and development by applying software engineering principles to infrastructure. It fosters shared responsibility for reliability across DevOps teams and a blameless culture in post-incident reviews.

Developers are more likely to build robust systems when they know they are responsible for how their code performs in production. When operations teams automate repetitive tasks, they can focus on architectural improvements rather than manual work.

Resilience becomes a shared responsibility—not a siloed function.

Integrating Security into the Quality Loop

Cyber incidents are now among the most disruptive operational risks. Quality Engineering should therefore build security into development pipelines through DevSecOps practices.

Automated vulnerability scanning, compliance checks, encryption validation, and runtime security monitoring ensure that protection is continuous rather than periodic.

IT executives consistently cite security as their top cloud concern. A single unpatched container vulnerability can lead to prolonged downtime and regulatory exposure.

In resilient architectures, security is a quality attribute, not an afterthought.

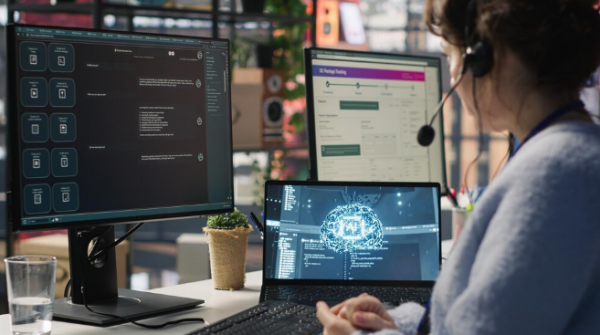

The Role of Artificial Intelligence in Modern QE

As cloud ecosystems grow beyond what humans can manage manually, Artificial Intelligence is proving to be a force multiplier for Quality Engineering.

AI-driven testing platforms can:

- Prioritize and automatically generate test cases.

- Detect anomalies in traffic patterns.

- Predict performance bottlenecks.

- Recommend remediation steps.

AI systems can discover patterns that human teams would otherwise overlook because of the sheer volume of telemetry data to analyze. This capability enables “generative quality,” where systems continuously optimize themselves based on operational insights.
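Traffic anomaly detection, one of the capabilities listed above, can be illustrated with a deliberately simple statistical stand-in. This is a rolling z-score, not a machine learning model, and the window size, threshold, and sample traffic are hypothetical; an AI-driven platform would use far richer techniques.

```python
from statistics import mean, pstdev
from typing import List


def find_anomalies(samples: List[float], window: int = 5, threshold: float = 3.0) -> List[int]:
    """Return indexes whose value deviates from the trailing window's mean
    by more than `threshold` standard deviations."""
    anomalies = []
    for i in range(window, len(samples)):
        history = samples[i - window:i]
        mu = mean(history)
        sigma = pstdev(history)
        if sigma == 0:
            # A perfectly flat history: any change at all is unusual.
            if samples[i] != mu:
                anomalies.append(i)
            continue
        if abs(samples[i] - mu) / sigma > threshold:
            anomalies.append(i)
    return anomalies


# Hypothetical requests-per-minute series with one obvious spike.
requests_per_minute = [100, 102, 98, 101, 99, 100, 103, 480, 101, 99]
print(find_anomalies(requests_per_minute))  # → [7]
```

Only the spike at index 7 is flagged; the ordinary fluctuation around 100 requests per minute stays below the threshold.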

For enterprises managing large digital portfolios, AI-enhanced QE enables innovation at speed without sacrificing reliability.

Resilience as Competitive Advantage

Reliability is a direct determinant of customer loyalty in a digital-first marketplace. Consumers have little to no tolerance for service disruptions. A single major failure can permanently drive users to competitors.

Organizations that consistently deliver seamless, always-on digital experiences transform reliability into a competitive differentiator. Resilience becomes part of the brand promise.

Quality Engineering provides the roadmap for reaching this standard. To get there, organizations should partner with experts who offer comprehensive IT Consulting to turn IT infrastructure into a strategic growth driver rather than a cost center.

Conclusion

Cloud outages may be a reality of modern computing, but they need not be a fatal blow to business operations. Through rigorous Quality Engineering, businesses can build the “digital armor” they need to adopt modern technology and thrive in it. With an emphasis on predictive resilience, observability, and a culture of continuous improvement, a sound Digital Transformation Strategy helps your company stay afloat and remain relevant, regardless of technical disruptions. As the cloud continues to evolve, so too must our approach to protecting the value it creates.

At STL Digital, we provide the strategic oversight and technical expertise necessary to turn resilience into a reality. Our approach to Quality Engineering is designed to help enterprises build “anti-fragile” systems—systems that don’t just survive stress but actually get better because of it.